AI-generated 3D models using images and text prompts as input (results included)

Creating 3D models has traditionally been a time-consuming and labor-intensive process. However, recent advancements in artificial intelligence (AI) have made it possible to generate 3D models automatically from 2D images or text descriptions, at a fraction of the cost of traditional methods. For example, creating a 3D model of a shoe manually from scratch takes approximately 18 hours and will set you back approximately $1,000. Using an AI tool to generate a low-poly-count 3D model of the same shoe cuts the whole process down to minutes and costs around $1. Of course, with AI technologies still in the very early stages of development, the trade-off here is the quality of the 3D models.

Alpha3D is one such platform that leverages AI technology to create 3D models from just a single-shot image or a text prompt.

In this article, we will look at the capabilities of both Alpha3D’s image-to-3D and text-to-3D generative AI. But before we dive in, let’s have a quick look at what the process of generating 3D models with AI looks like:

AI-generated 3D models using a single-shot 2D image input

Below you’ll find examples of 3D models generated with Alpha3D AI. The single-shot image used as input to generate the 3D model can be seen on the left.

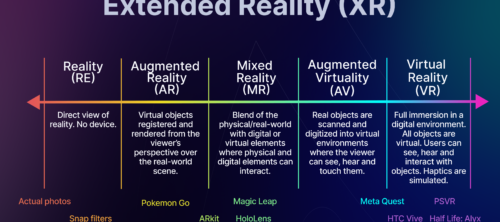

View all of the AI-generated 3D models below in augmented reality (AR).

AI-generated 3D models using text prompt input

For the batch of 3D models below, we entered text prompts directly on the Alpha3D platform. After receiving a text prompt, Alpha3D generates a selection of input images to pick from. We have highlighted the images we selected to convert into the 3D models below.